In 2022, Microsoft CEO Satya Nadella’s son died at the age of twenty-six after a lifelong battle with cerebral palsy (CP), a neurological disorder caused by birth-related brain damage. When Nadella delivered a talk in 2017 about the use of assistive artificial intelligence for people with disabilities like CP, he was contacted by one of his colleagues in Spain. Julián Isla, a software engineer at Microsoft, emailed Nadella out of a sense of resonance: his own son suffered from a rare genetic epilepsy, making Isla the parent of a child living with disability as well. Like Nadella, Isla was motivated to think about the role of artificial intelligence in assisting other parents who, as he described it, were on the “odyssey of diagnosis”.

It took years for Isla’s son, Sergio, to be diagnosed with Dravet syndrome, a condition much rarer than cerebral palsy. This made Isla’s experience as a parent both similar to and different from Nadella’s. Rather than assisting people with existing disabilities (something Microsoft had already embarked on with tools like Seeing AI), Isla was looking for diagnostic tools that would spare parents and patients misdiagnosis and incorrect treatments, and shorten the painful journey of understanding rare diseases. In his email to Nadella, Isla wrote about harnessing the power of AI to help clinicians speed up the process of precision diagnosis. In January 2025, building on OpenAI’s large language model GPT-4, Isla and his team launched DxGPT (or DiagnosticsGPT), an experimental research tool that, once specific patient details (height, weight, age, sex, symptoms) are entered, generates a ranked list of possible diagnoses that match those details. The promise of a “very precise diagnosis in a matter of minutes” is why Microsoft called it a “disruptive AI solution” in the title of a story it published online.

‘Disruptive technology’ has long been a buzzword in the world of scientific and technological innovation. Not only does the term celebrate the disruption of a market or a commodity supply chain as imminent and good for everyone; it also reifies a belief in the linear progression of modern science. Tellingly, however, it is often those whose own livelihoods are not at risk who celebrate disruption. In this piece, I think with AI-assisted diagnostics to suggest that it is not disruption but precision itself that has become an ideal under late capitalism. Taking precision seriously opens up more pressing contemporary questions: Who is precision good for? Who has access to it? How is precision produced? And who bears its cost?

While DxGPT, now open-source software, is being adopted by over 500,000 healthcare professionals in Europe, the U.S., China and India, other AI-assisted diagnostic tools are expanding simultaneously. DeepRare, another platform for diagnosing rare diseases, developed by researchers at Xinhua Hospital in Shanghai, is already in use at 500 institutions and by 1,000 practitioners. In an era of techno-capitalism, when national economies can be reshaped by big tech funding, achieving precision has become a key site of competition. In the case of AI-assisted diagnostics, this means widening health data sourcing in terms of both quantity and location. It also means labeling and annotating this data to train algorithms. Data sourcing and labeling are not only about creating new markets and consumers; they also bring new populations and landscapes into the production of precision.

The Claim of ‘Disruptive Technology’

The technologists of today who celebrate disruptive technologies owe their ideological perspective to the mid-twentieth-century Austrian economist Joseph Schumpeter. In his 1942 book ‘Capitalism, Socialism, and Democracy’, Schumpeter proposed that ‘creative destruction’ was the central feature of economic growth under capitalism. Unlike his contemporary John Maynard Keynes, who argued that nations flourish under conditions of economic stability, Schumpeter held that innovation comes at the cost of destroying older technologies, markets and economic processes, and that this destruction is inherently good for the economy in the long run.

Half a century later, Schumpeter’s macro-economic theory was taken up by the American business theorist Clayton Christensen (2008), who popularized the notion of ‘disruptive technology’ as a business and innovation challenge. Christensen explained how simpler, cheaper technologies can upend established industry leaders: large companies stay loyal to their existing customer base and fail to recognize the need for new technologies until smaller companies have introduced and improved them. In this sense, to disrupt meant to disrupt an established market and its consumer base. To remedy this, Christensen suggested that large companies maintain smaller divisions to compete with start-ups and avoid being out-maneuvered by them.

The claims of disruptive technology and innovation, however, carry massive geopolitical blind spots and deny the ongoing imperial conditions under which technoscience operates. As generations of postcolonial scholars have argued, innovation hubs are often sustained by labor exploitation in the global South (Mignolo 2011). Innovation thus becomes part of what the Peruvian sociologist Aníbal Quijano (2000) first described as the ‘colonial matrix of power’, which relies on the persisting control of land, economy, knowledge and gendered sexuality in the colonized world, even after formal decolonization, to maintain capitalist hegemony. While AI tools exist at varying scales of complexity and expertise, it is no surprise that the most lucrative platforms are operated by tech giants in the U.S. and China, namely OpenAI, Anthropic, DeepSeek, and Microsoft.

This colonial matrix of power is complemented by the fact that artificial intelligence is technologically mundane rather than spectacular (Forsythe 2001). AI tools are embedded in existing infrastructures in ways that lead not only to their routinization but also to their adoption in practices other than those they were originally developed for. In diagnostics, for example, doctors and specialists can use platforms like DxGPT and DeepRare not only for primary screening that narrows their search for a rare disease but also to learn how to communicate possible diagnoses to a patient.

It remains to be studied how medical practice itself will change as system-generated recommendations interact with the situated judgement of medical specialists in clinical settings. Yet it is clear that these nuances cannot be captured by probing only the disruptive effects of these tools on markets and their expansion to new consumers. We need a sharper, and preferably more critical, way of studying how precision technologies mediate human experience, social life and national economies today.

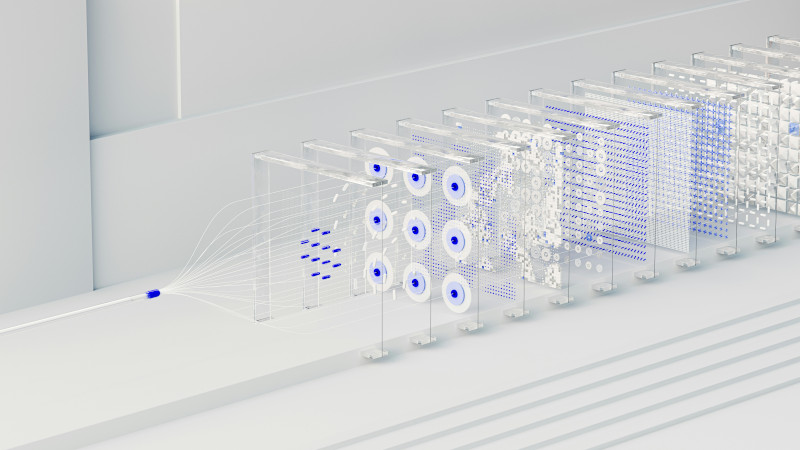

An artistic illustration of a group of computer servers connected in an ‘artificial neural network’, a machine learning model that can be trained on pre-labeled data for tasks such as natural language processing and object and speech recognition. Source: Unsplash.

Who Labels Data?

Using generative AI in diagnostics requires training on large amounts of health data from medical literature and clinical databases, as well as human labeling of this data. Indeed, data labeling and content moderation have grown into lucrative industries in the global South over recent decades. A crucial part of generative AI is the use of “humans in the loop”: through careful feedback loops, a human reviews, approves, modifies or overrides content produced or recognized by AI, and the system may learn from these interventions.

American AI companies often establish subsidiaries or contract with outsourcing firms in countries with surplus labour and high rates of unemployment, where ‘data workers’ perform low-paid, low-skilled tasks such as annotating images: circling objects, distinguishing skin colour (and thus racial identities), or identifying and marking vehicles on the road to train self-driving cars. In 2024, CBS News reported on ‘human in the loop’ data workers in Nairobi, Kenya, who filed a lawsuit against Meta and its outsourcing contractor, Sama, over “unreasonable working conditions” that caused psychological harm in the course of data labeling. These workers recounted being hired for a stated job, such as translation, and then soon being saddled with the “worst of the worst content”: weeding out pornography, abuse, and videos of suicide and killings. A similar report emerged more recently from India, where rural women were hired as AI workers by global tech companies to moderate abusive and pornographic content.

Upon first reading the CBS report, it is easy to miss that the same Kenyan workers labeling objects like furniture, vehicles on the road, skin colour, racial identity, and explicit sexual content were also tasked with “identifying abnormalities in CT scans, MRIs and in X-ray reports”. Here, questions of skill and expertise become glaring. Medical data annotation remains a comparatively high-stakes, specialized practice that can influence diagnosis and treatment outcomes for future patients. Why, then, should there be a need to hire non-skilled workers for specialized data labeling?

It is here that the idealization of precision under late capitalism meets the pre-existing colonial matrix of power. Precision inherently rests on gathering and comparing large volumes of data. I have written elsewhere (Bhan 2024) about a similar aspiration to precision in genomic medicine. This aspiration rests on the assumption that the more diversely global genome databases represent ethnic/racial groups, the more precise medicine will become for each group and individual in the future. AI-assisted diagnostics seems to work through a similar logic. Medical data labelled by non-specialists may not be directly incorporated into platforms like DxGPT or DeepRare, but it will help make the algorithms underlying such platforms more precise in screening for disease risks. This is a much less speculative statement than it might initially sound.

In India, where I conducted research on genetic screening and its use in preventing rare diseases, numerous reports and studies have documented the distress of underpaid female voluntary health workers (Pulagam and Satyanarayana 2021; Bärnreuther 2024). More commonly known as Accredited Social Health Activists (ASHAs), these workers are a unique part of India’s public health system, initially established to address child and maternal health needs in rural regions where hospital services are scarce. As Priya Goswami recently observed,

“Today, as their role extends onto digital platforms, ASHAs are not only bringing care to the forefront but playing an important role in collecting health data. The data they collect includes family type (caste, class, religion), family size, history, current illnesses, assigned tracking numbers (such as in India’s Mother and Child Tracking System), and biomarkers, among other details.”

While ASHA workers from marginalized caste and class backgrounds are vulnerable to work-related psychological distress, the data they collect is being plugged into AI tools to make screening and diagnostic assessments more precise, as announced at the recent AI Summit in New Delhi.

As AI-assisted diagnostics develop to bridge local contextual data with medical literature and vast amounts of high-skilled medical annotation, we must pay attention to how data sourcing and labeling are distributed across uneven labour conditions. The colonial matrix of power within which low-skilled data work is outsourced to the global South also frames medical innovations in the global North as inherently benevolent. This moral framework is evident when narratives foreground a Microsoft engineer as the parent of a disabled child while “human in the loop” data workers or healthcare workers are perceived as detached individuals escaping unemployment.

Disability and Precision

While industry leaders celebrate disruption, they distract us from asking the more pressing question: who bears the cost of encoding precision in technologies? Following Diana Forsythe, the point is not that AI is spectacular, but that it is hard not to be enchanted by its declared and promised precision. Diagnosis speaks to our most fundamental will to know as modern humans: to act in time, to prevent illness, and to avoid becoming sick over and over again. When companies like Microsoft draw on the personal experiences of executives raising disabled children, they operate within what disability scholar Joseph Stramondo (2010) called the ‘master narrative of the pitiful disabled person’[1], a framework that associates disability solely with suffering and pity while obscuring broader structural conditions. The figure of the disabled body becomes a justification for any and all kinds of innovation and obscures the fact that disability and disease are not isolated phenomena. Indeed, they are often produced within the very systems that promise to care for them.

AI-assisted diagnostics deserves our attention so that we can follow how families affected by rare diseases might benefit in a deeply unequal and varied world of health. But it does not need our enchantment with what precision technologies can do for the disabled body. While improving the diagnosis of rare diseases is important, it should not eclipse concern for the digital and healthcare workers who are themselves becoming sick in the race towards precision. These workers not only help shorten the diagnostic odyssey but also absorb, in deeply embodied ways, the costs of producing precision.

This post was edited by Contributing Editor Sook Lin Toh.

References

Bärnreuther, Sandra. 2024. “Surveillance Medicine 2.0: Digital Monitoring of Community Health Workers in India.” Anthropology & Medicine 31 (3): 250–264.

Bhan, Samiksha. 2024. “Population in Fragments: Inclusion, Risk, and the Anticipatory Universality of Postcolonial Genomics.” Zeitschrift Für Ethnologie/Journal of Social and Cultural Anthropology 149 (1): 127–146.

Christensen, Clayton M. 2008. The Innovator’s Dilemma: When New Technologies Cause Great Firms to Fail. Boston: Harvard Business Review Press.

Mignolo, Walter D. 2011. The Darker Side of Western Modernity: Global Futures, Decolonial Options. Durham: Duke University Press.

Pulagam, P., and P. T. Satyanarayana. 2021. “Stress, Anxiety, Work-Related Burnout among Primary Health Care Worker: A Community Based Cross Sectional Study in Kolar.” Journal of Family Medicine and Primary Care 10 (5): 1845–1851.

Quijano, Aníbal. 2000. “Coloniality of Power, Eurocentrism, and Latin America.” Nepantla: Views from South 1 (3): 533–580.

Stramondo, Joseph A. 2010. “How an Ideology of Pity Is a Social Harm to People with Disabilities.” Social Philosophy Today 26: 121–34.