From the North in Safad (where my father is from) and Galilee to the South East in Al-Lydd (where my mother is from) and down to Jerusalem and Gaza, the food differs but is united at the same time, through love and history… Palestinian food is found in the home. That is where it all begins. (Joudie Kalla, Palestine on a Plate, 2016)

Food is the most precious part of Palestinian heritage. For Palestinian food not to go extinct, the young have to learn from the old. (Aisha Azzam, Aisha’s Story, film forthcoming 2024)

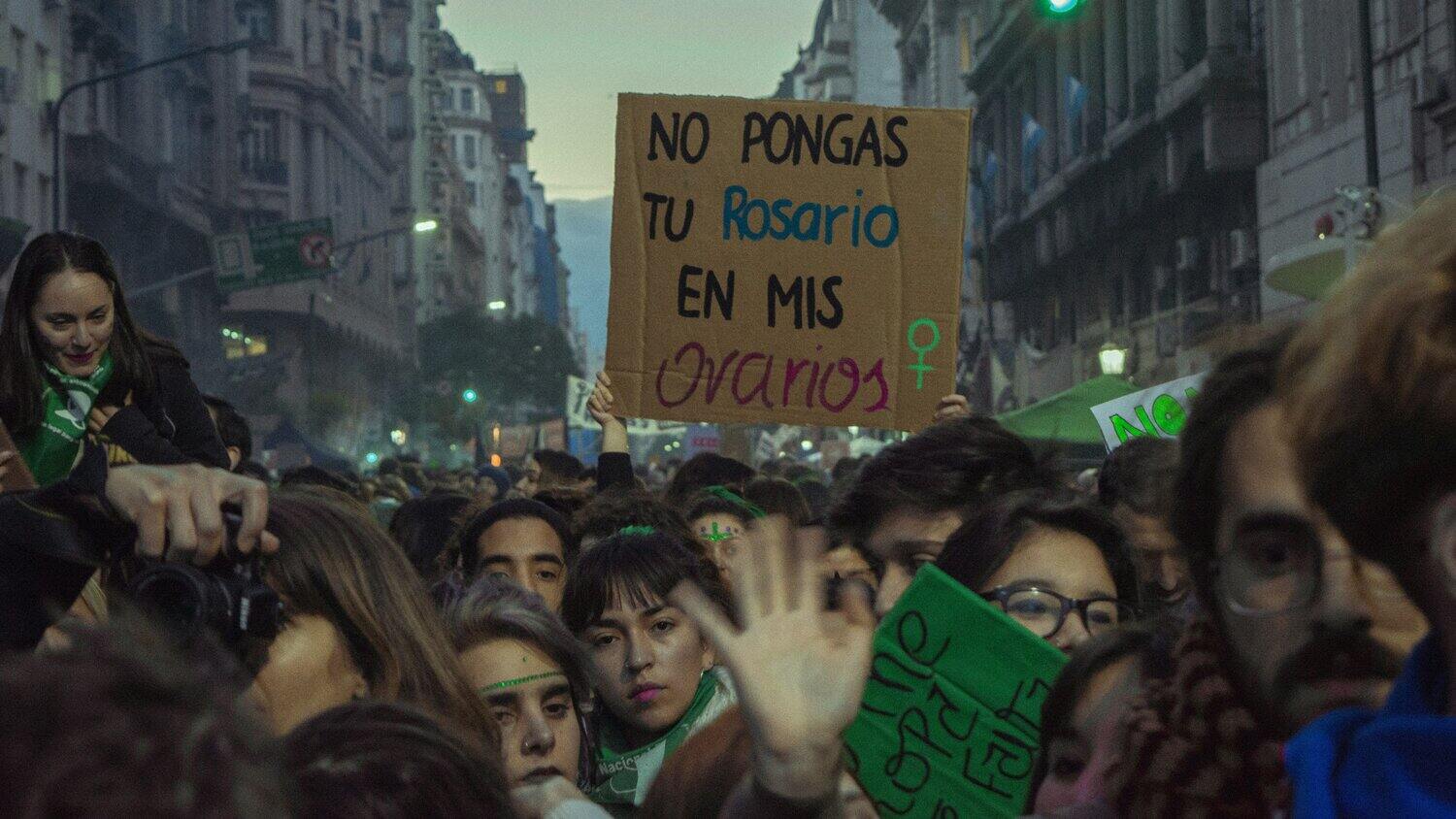

Around the world, millions have taken to the streets in support of a free and thriving Palestine in the face of active genocide and the continuance of settler colonial violence. Visible on the streets and all over social media feeds, scattered among flags and keffiyehs, are images of the vibrant watermelon. This trinity of nationalist symbols bears a shared honoring of an ancient yet enduring cultural intimacy with Levantine lands. A cursory search into the history of the Palestinian flag’s colors (black, white, red, and green) turns up many possible origins and mythologies behind the green portion of the flag. These include, but are not limited to, representations of influential Arab dynasties, peace, and Islamic faith, as well as a deep love and appreciation for the olive trees that bloom across the landscape. The keffiyeh, a traditional scarf, embodies similar sentiments entangled in its design. Within its iconic weave are visual histories of Palestinian trade pathways, a robust fishing culture on the Mediterranean Sea, and, once again, the olive trees. Last is the watermelon, which was used as a covert placeholder for the flag during a period of occupation when the display of the flag itself was forbidden. In essence, at the core of these symbols are Palestinian foodways and culture.